Microsoft Best Practices for SharePoint Data

According to the TechNet article “Software boundaries and limitations for SharePoint 2013”, each Microsoft SQL Server content database should not exceed 200 gigabytes (GB) in size. Even if some or most of the data has been offloaded to other storage locations using external or Microsoft SharePoint remote BLOB storage (EBS or RBS), “the total volume of remote BLOB [binary large object] storage and metadata in the content database must not exceed this limit.”

Customers often ask what purpose DocAve Storage Manager serves, then, if they are still “strongly recommended” to adhere to this limit.

Storage Manager externalizes unstructured SharePoint data (BLOBs) and stores it on network file shares and a wide variety of other configurable devices and locations. The goal is to reduce the amount of storage space required on expensive SQL servers while keeping the content fully accessible to end users. However, this limit would seem to imply that 200 GB must be set aside for each content database even if, for example, 180 GB of that data is no longer actually stored in SQL. If that is the case, how does Storage Manager help reduce SQL storage usage?

One may note that the content database limit can be increased to 4 terabytes (TB), but only if the storage disks can provide at least 0.25 IOPS (input/output operations per second) per GB of content, with 2 IOPS per GB recommended. If externalized content must still be housed on such high-performance storage, and only a relatively small amount of data can be offloaded before the minimum IOPS requirement can no longer be met, then how does this help customers save significantly on storage costs?
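Because TechNet expresses the IOPS requirement per gigabyte of content (0.25 IOPS per GB minimum, 2 IOPS per GB recommended), the disk performance needed grows in step with the database. The short sketch below simply does that arithmetic; the function name is our own, and the sizes use 1 TB = 1,024 GB as an assumption.

```python
# Per-GB IOPS figures from the TechNet guidance for content databases up to 4 TB:
# 0.25 IOPS per GB is the minimum, 2 IOPS per GB is recommended.
MIN_IOPS_PER_GB = 0.25
RECOMMENDED_IOPS_PER_GB = 2.0


def required_iops(db_size_gb: float) -> tuple[float, float]:
    """Return (minimum, recommended) IOPS for a content database of the given size."""
    return db_size_gb * MIN_IOPS_PER_GB, db_size_gb * RECOMMENDED_IOPS_PER_GB


if __name__ == "__main__":
    # 200 GB guideline, 1 TB, and the 4 TB ceiling (assuming 1 TB = 1,024 GB).
    for size_gb in (200, 1024, 4096):
        low, high = required_iops(size_gb)
        print(f"{size_gb:>5} GB -> {low:,.0f} to {high:,.0f} IOPS")
```

At 4 TB this works out to 1,024 IOPS at minimum and 8,192 IOPS recommended, which is why keeping large volumes of content on storage that meets these figures is costly.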

Cases for Enabling a SharePoint RBS Provider

It is important to keep in mind that these figures from Microsoft are for their own FILESTREAM provider, and are not necessarily applicable to the AvePoint DocAve RBS provider. This is because all FILESTREAM operations happen on the same server as SQL Server, while DocAve is capable of shifting them to other servers. Where DocAve is concerned, these are more suggestions than hard requirements.

“Plan for RBS in SharePoint 2013”, which also applies to SharePoint 2010, is another TechNet article that administrators may find helpful in their evaluation of whether or not to utilize remote BLOB storage. In short, there are two primary use cases for externalizing data:

- Capture large files during data migrations or regular end-user file uploads

- Archive idle content to lower, less expensive tiers of storage

The first scenario is for active content, for which organizations should indeed conform as closely as possible to the low-latency, high-IOPS, and SQL database size requirements outlined in the aforementioned TechNet articles. One advantage here is that you can keep your content databases from growing much larger, preventing database bloat. As SQL Server databases become more bloated, they require more storage space and process transactions less efficiently. Another is that you can even improve read-write access speeds on those large files, as there are typically observable performance gains when files are moved out of SQL Server and onto external high-tier disks. And, provided your storage meets the IOPS requirements, your content databases can grow to several times the 200 GB guideline.

Our white paper, Optimize SharePoint Storage with BLOB Externalization, goes into greater detail about the important factors to consider when you are looking to maximize BLOB performance in your SharePoint environment. The general guideline is: “Our testing has shown that BLOBs greater than 1 MB generally perform better when externalized – assuming the BLOB store itself performs well – whereas very small files smaller than 256 KB generally perform better when stored in the content database.”
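As a rough illustration, the quoted guideline can be expressed as a simple size-based rule. This is a minimal sketch, not how Storage Manager evaluates its rules; the function name and return labels are hypothetical, and the guideline itself says nothing about files between 256 KB and 1 MB, so those are flagged for testing in your own environment.

```python
KB = 1024
MB = 1024 * KB

# Thresholds taken from the white paper guideline quoted above.
EXTERNALIZE_ABOVE = 1 * MB    # BLOBs larger than this generally perform better externalized
KEEP_IN_SQL_BELOW = 256 * KB  # BLOBs smaller than this generally perform better in SQL


def suggest_placement(blob_size_bytes: int) -> str:
    """Hypothetical helper: suggest where a BLOB of the given size should live."""
    if blob_size_bytes > EXTERNALIZE_ABOVE:
        return "external"
    if blob_size_bytes < KEEP_IN_SQL_BELOW:
        return "sql"
    # 256 KB to 1 MB is not covered by the guideline; measure in your own environment.
    return "test"
```

For example, a 5 MB file yields `"external"`, a 100 KB file yields `"sql"`, and a 512 KB file falls into the untested middle band.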

However, the second scenario is by far the one that introduces greater cost savings. When it comes to idle content such as old versions or documents that have not been accessed in a long time, the IOPS requirement becomes much less important. In fact, even Microsoft’s 4 TB limit per content database no longer applies, because there is actually no explicit limit for “document archive” scenarios. This means that much more content can be externalized, as long as the files are being read and written infrequently. You should therefore be able to store prodigious amounts of BLOB data on cheaper storage, potentially saving you thousands or even millions of dollars per year.
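Archiving idle content usually comes down to an age-based rule, such as "not accessed within the past year." The sketch below shows that idea in isolation. The `Item` record, the `select_idle` function, and the 365-day default are all hypothetical choices for illustration, not Storage Manager settings.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Item:
    # Hypothetical record: a document's URL and when it was last accessed.
    url: str
    last_accessed: datetime


def select_idle(items: list[Item], now: datetime, idle_days: int = 365) -> list[str]:
    """Return URLs of items not accessed within `idle_days` (hypothetical archive rule)."""
    cutoff = now - timedelta(days=idle_days)
    return [item.url for item in items if item.last_accessed < cutoff]
```

Items selected this way are candidates for cheaper storage tiers, since they are read and written infrequently and the IOPS requirement matters far less for them.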

In both scenarios, when BLOBs have been offloaded from SQL Server to remote storage locations, the administrator can then shrink the content databases so that they return close to their original size before all that content was uploaded to SharePoint. As a further advantage, this effectively reduces backup time, storage costs, and IOPS requirements.

SQL Server is required for many SharePoint activities beyond the reading and writing of BLOB data. Once BLOB-related I/O has been taken out of the equation, SQL Server resources can be reallocated to other tasks, resulting in improved responsiveness for those other activities. Meanwhile, you can postpone the need to purchase new hardware to support additional SQL servers.

Note: If you are running SharePoint 2013 and questioning whether RBS would still be useful or compatible in the age of shredded storage, please refer to John Hodges’ earlier blog post, “The Case for Remote BLOB Storage in Microsoft SharePoint 2013”.

Additional Benefits Offered by DocAve

As mentioned in the previous section, the AvePoint DocAve RBS provider is capable of overcoming some limitations inherent in Microsoft’s FILESTREAM provider. For instance, “Plan for RBS in SharePoint 2013” mentions that FILESTREAM does not support encrypting or compressing BLOB data, but DocAve Storage Manager has long offered such capabilities.

Reporting

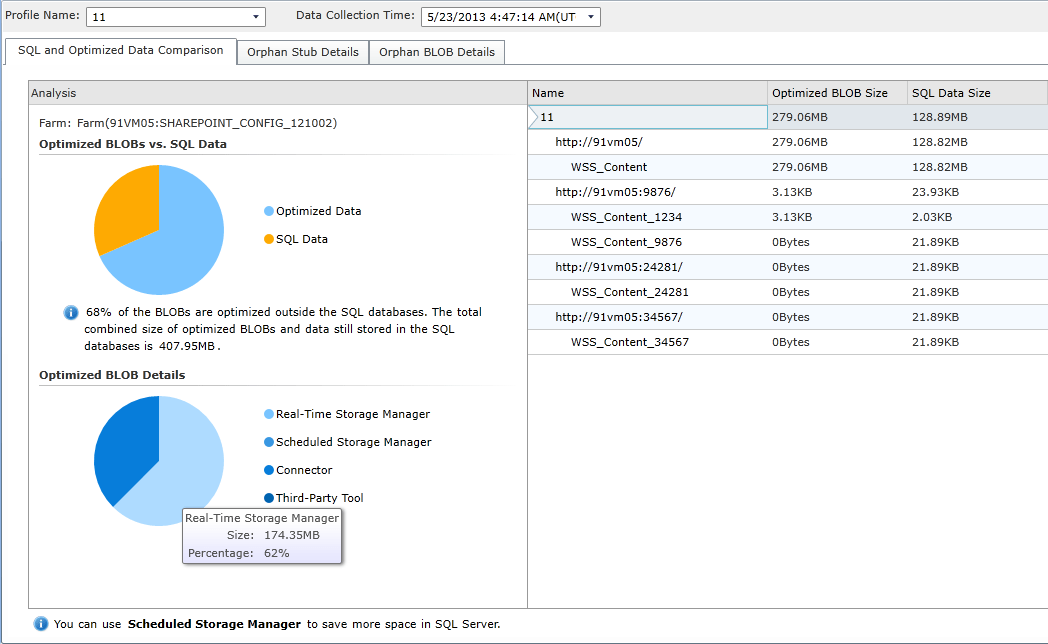

As you can imagine, once RBS has been enabled, your SQL environment will naturally become more difficult to manage. Once the databases have been shrunk, externalized content is no longer factored into their total size in SQL Server, so it becomes more difficult to identify which content databases are at or approaching the 200 GB best-practice limit. In DocAve 6 Service Pack (SP) 3, DocAve Storage Manager introduced a new Storage Report covering the segmentation of storage in your SharePoint environment (data residing in SQL databases vs. file servers), database consumption, each file's date of last access, the externalization path of each BLOB, and more. The Storage Report gives you a clear, comprehensive overview of just how much SharePoint data you have and where it all resides, simplifying the task of storage administration.
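The underlying check is simple once both numbers are available: because the 200 GB limit counts remote BLOB storage together with the metadata in the content database, a database's effective size is its SQL size plus its externalized BLOB volume. The sketch below assumes you already have both figures per database; the `ContentDb` type, the database names, and the 90% warning threshold are hypothetical.

```python
from dataclasses import dataclass

LIMIT_GB = 200        # TechNet best-practice limit, counting SQL data plus remote BLOBs
WARN_FRACTION = 0.9   # hypothetical: warn once a database reaches 90% of the limit


@dataclass
class ContentDb:
    name: str
    sql_gb: float           # size reported by SQL Server after shrinking
    externalized_gb: float  # BLOB data held in external storage

    @property
    def total_gb(self) -> float:
        return self.sql_gb + self.externalized_gb


def near_limit(dbs: list[ContentDb]) -> list[str]:
    """Names of databases whose combined SQL + externalized size is near the limit."""
    return [db.name for db in dbs if db.total_gb >= LIMIT_GB * WARN_FRACTION]
```

A database reporting only 20 GB in SQL Server but holding 170 GB of externalized BLOBs would be flagged here, even though SQL Server alone makes it look small.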

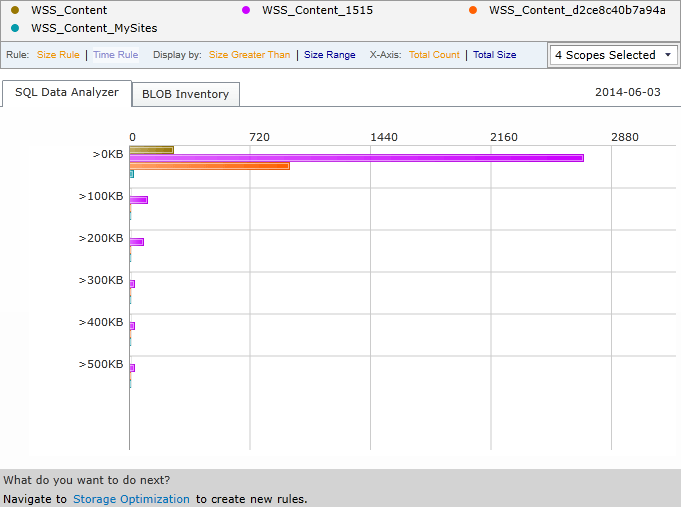

DocAve Report Center provides a few more storage-related reports. You can view trending data on storage consumption over time for multiple site collections within a single graph, predict how much data a site collection will contain in the future, analyze how many non-externalized items exist within particular size ranges, and more.
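To give a feel for how a storage projection can work, here is one simple approach: fit a straight line to past size samples by ordinary least squares and extend it forward. This is only an illustrative sketch; we are not claiming Report Center uses this method, and the `linear_forecast` function and its (time, size) sample format are hypothetical.

```python
def linear_forecast(samples: list[tuple[float, float]], at: float) -> float:
    """Fit size = intercept + slope * t by ordinary least squares, evaluate at `at`.

    samples: (time, size_gb) pairs, e.g. time in months since the first sample.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_s = sum(s for _, s in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        raise ValueError("samples must span more than one point in time")
    cov = sum((t - mean_t) * (s - mean_s) for t, s in samples)
    slope = cov / var_t
    intercept = mean_s - slope * mean_t
    return intercept + slope * at
```

For a site collection measured at 10, 12, and 14 GB over three consecutive months, the fitted line grows by 2 GB per month and projects 22 GB at month 6. Real growth is rarely this linear, so treat such projections as rough planning figures.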

Data Protection

When you have stubs of documents in SQL Server and the actual BLOBs themselves in disparate external storage locations, another critical requirement is backing up all of this data to meet service level agreements (SLAs).

The first cited TechNet article warns that when you create an environment with content databases exceeding 200 GB each (i.e. when you have enabled EBS or RBS), “[r]equirements for backup and restore may not be met by the native SharePoint Server 2013 backup.” Fortunately, DocAve Backup and Restore has you covered here. Not only can you back up all of your stub databases and overlarge content databases, but you can even include the BLOB stores themselves.

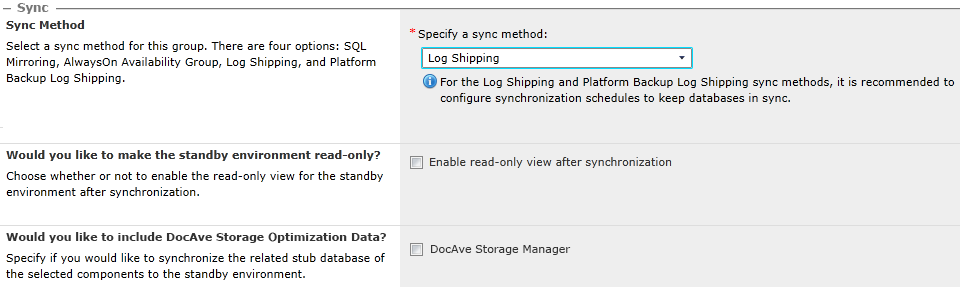

For high availability and disaster recovery, DocAve High Availability also supports protecting your SQL databases and Storage Manager BLOBs (the latter as of DocAve 6 SP 4).

Remote BLOB storage will invariably increase the complexity of your SharePoint environment, but when done effectively according to the best practices outlined in this blog post, it can lower storage infrastructure costs appreciably and even improve performance under certain circumstances. With DocAve’s numerous offerings for end-to-end SharePoint administration, most of the initial hurdles and ensuing concerns around implementing storage optimization have been simplified and streamlined.